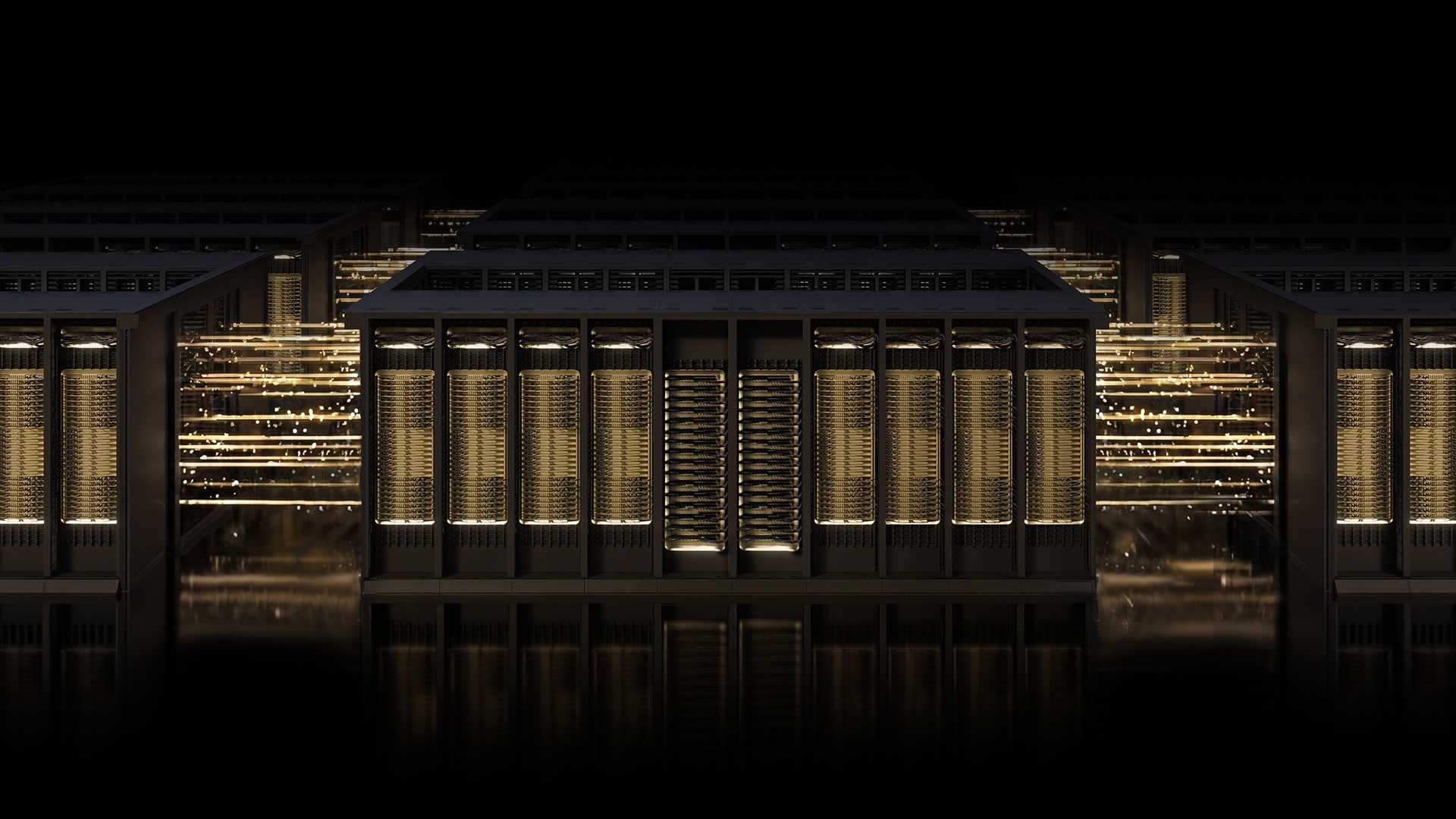

How NVIDIA Spectrum-X and MRC Are Redefining AI Networking

In the race to build the most powerful AI factories, networking is the invisible backbone that can make or break performance. NVIDIA's Spectrum-X Ethernet fabric, now enhanced with Multipath Reliable Connection (MRC), is aimed squarely at gigascale AI. This Q&A explores the technology, its benefits, and the industry leaders (OpenAI, Microsoft, and Oracle) that already use it to train frontier models. It also explains how MRC turns a single-lane network into a smart, multi-path grid that keeps GPUs fed and training runs efficient.

What is NVIDIA Spectrum-X Ethernet?

NVIDIA Spectrum-X is an open, AI-native Ethernet fabric designed specifically for large-scale AI workloads. It goes beyond traditional networking by combining purpose-built hardware, deep telemetry, and intelligent fabric control. This stack is designed to deliver low latency, high throughput, and resilience, qualities essential for training massive AI models that run for days or weeks. Spectrum-X is deployed by industry giants like OpenAI, Microsoft, and Oracle, which rely on its ability to scale to tens of thousands of GPUs without compromising performance. Its openness allows integration into diverse data center environments, and it is built to support emerging protocols like Multipath Reliable Connection (MRC).

What is Multipath Reliable Connection (MRC)?

Multipath Reliable Connection (MRC) is an RDMA transport protocol that enables a single connection to distribute traffic across multiple network paths simultaneously. Developed collaboratively by NVIDIA, Microsoft, and OpenAI, MRC replaces the old single-path approach with a multi-lane strategy. Think of it like upgrading from a single road through a town to an interconnected street grid with a real-time traffic app: data can dynamically reroute around congestion or failures. This improves throughput, load balancing, and availability for AI training fabrics. MRC was first proven in production on NVIDIA Spectrum-X hardware and is now released as an open specification through the Open Compute Project.
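
To make the road-versus-street-grid analogy concrete, here is a minimal Python sketch that contrasts pinning each flow to one path with spraying its packets over every path. It is a toy model, not the MRC specification: the function names (`single_path_assign`, `multipath_spray`), the flow labels, the packet counts, and the choice of CRC32 hashing and round-robin spraying are all assumptions made for illustration.

```python
import zlib
from collections import Counter


def single_path_assign(flows, num_paths):
    """Pin every packet of a flow to one path chosen by hashing the flow id.

    This models the single-path approach: a large flow loads exactly one
    link, no matter how idle the other links are.
    """
    load = Counter()
    for flow_id, packets in flows.items():
        path = zlib.crc32(flow_id.encode()) % num_paths
        load[path] += packets
    return [load[p] for p in range(num_paths)]


def multipath_spray(flows, num_paths):
    """Spread the packets of every flow round-robin over all paths.

    This models the multi-path idea: one connection uses many links at once.
    """
    load = [0] * num_paths
    next_path = 0
    for packets in flows.values():
        for _ in range(packets):
            load[next_path] += 1
            next_path = (next_path + 1) % num_paths
    return load


# Hypothetical workload: two heavy flows and two light ones (packet counts).
flows = {
    "gpu0->gpu8": 4000,
    "gpu1->gpu9": 4000,
    "gpu2->gpu10": 100,
    "gpu3->gpu11": 100,
}

pinned = single_path_assign(flows, num_paths=4)
sprayed = multipath_spray(flows, num_paths=4)

print("per-path load, one path per flow:", pinned)
print("per-path load, sprayed packets:  ", sprayed)
```

In this toy workload the busiest link under per-flow pinning carries at least 4,000 packets, because a heavy flow cannot be split, while spraying puts 2,050 packets on each of the four links. A real transport also has to cope with packets arriving out of order, which this sketch ignores.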

How does MRC improve AI training performance?

MRC directly tackles three major network bottlenecks: congestion, load imbalance, and packet loss. By spreading traffic across all available paths, it avoids overloaded links and keeps GPU utilization high. Even under congestion, it reroutes data in real time to maintain bandwidth. When packet loss occurs, which is inevitable in long-running jobs, MRC uses intelligent retransmission to recover rapidly with minimal impact. This reduces GPU idle time, a critical factor given that large training runs can cost millions of dollars per day. As Sachin Katti of OpenAI noted, MRC's end-to-end approach avoided typical network slowdowns and interruptions, maintaining frontier training efficiency. The result is faster training completion and lower total cost of ownership.
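
The recovery idea can be sketched in a few lines of Python: resend only the packets that were lost, and send them over paths that are still healthy. The article does not describe MRC's actual retransmission mechanism, so everything here is an assumption for illustration, including the helper names (`send`, `transfer`), the path labels, and the simplification that a failed path drops every packet sent on it.

```python
def send(seqs, paths, failed):
    """Spray sequence numbers round-robin over `paths`.

    Packets that land on a path listed in `failed` are lost.
    Returns (set of delivered sequence numbers, list of lost ones).
    """
    delivered, lost = set(), []
    for i, seq in enumerate(seqs):
        path = paths[i % len(paths)]
        if path in failed:
            lost.append(seq)
        else:
            delivered.add(seq)
    return delivered, lost


def transfer(num_packets, paths, failed):
    """Send packets 0..num_packets-1, then resend only the lost ones
    over the paths that are still healthy.

    Returns (set of delivered sequence numbers, number of retransmissions).
    """
    healthy = [p for p in paths if p not in failed]
    if not healthy:
        raise RuntimeError("no healthy path available")

    delivered, lost = send(range(num_packets), paths, failed)
    retransmitted = len(lost)

    # Selective retransmission: only the missing packets, not the whole window.
    recovered, _ = send(lost, healthy, failed)
    delivered |= recovered
    return delivered, retransmitted


# Hypothetical fabric with four paths, one of which (C) has failed.
delivered, retransmitted = transfer(12, ["A", "B", "C", "D"], failed={"C"})
print(sorted(delivered))   # all 12 sequence numbers arrive
print(retransmitted)       # 3 packets (2, 6, 10) were resent
```

Only the three packets that crossed the failed path are resent, so the cost of the failure is proportional to what was actually lost and not to the size of the transfer.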

Who are the early adopters of MRC?

The technology has been embraced by some of the most demanding AI organizations. OpenAI deployed MRC in its Blackwell-generation infrastructure, co-developed with NVIDIA. Microsoft integrated MRC into its Fairwater data center, one of the largest AI factories built for training frontier LLMs. Oracle Cloud Infrastructure's Abilene data center also relies on MRC to deliver performance, scale, and efficiency. These adopters demonstrate that MRC is not a theoretical concept but a proven solution that enables gigascale AI production. Their collaborations highlight a shared commitment to advancing networking for the next generation of AI.

How does MRC achieve high GPU utilization?

GPU utilization is the metric that matters most in AI training, and MRC improves it by ensuring every GPU gets the bandwidth it needs throughout a training run. It load-balances traffic across all network paths, preventing any single GPU from being starved due to congestion on a specific route. When some paths slow down, MRC shifts traffic to healthier ones in real time, avoiding bottlenecks. Additionally, intelligent retransmission minimizes the impact of short-lived interruptions, so GPUs don't sit idle waiting for retransmitted data. Administrators gain fine-grained visibility into traffic paths, allowing them to monitor and tune performance. This holistic approach helps maximize the utilization of expensive GPU clusters.
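
One simple way to picture "shifting traffic to healthier paths" is a weighted split in which congested paths receive a smaller share of the packets. The Python sketch below is an assumption-laden illustration, not MRC's algorithm: the congestion signal (a per-path queue depth), the inverse weighting rule, and the function names (`path_weights`, `split_traffic`) are all hypothetical.

```python
import math
from fractions import Fraction


def path_weights(queue_depths):
    """Weight each path by 1 / (1 + queue depth), normalized to sum to 1.

    Exact fractions are used so the weights add up to exactly 1.
    """
    inverse = [Fraction(1, 1 + depth) for depth in queue_depths]
    total = sum(inverse)
    return [w / total for w in inverse]


def split_traffic(packets, queue_depths):
    """Divide `packets` among paths in proportion to their weights."""
    weights = path_weights(queue_depths)
    shares = [math.floor(packets * w) for w in weights]

    # Rounding down can leave a few packets unassigned; give them to the
    # least-congested paths first.
    remainder = packets - sum(shares)
    least_congested_first = sorted(
        range(len(shares)), key=lambda i: queue_depths[i]
    )
    for i in least_congested_first[:remainder]:
        shares[i] += 1
    return shares


# Hypothetical telemetry: the third path has a backlog of 9 queued packets.
print(split_traffic(1000, [0, 0, 9, 0]))   # [323, 323, 32, 322]
print(split_traffic(1000, [0, 0, 0, 0]))   # [250, 250, 250, 250]
```

With the hypothetical backlog on the third path, that path receives about a tenth of what each idle path carries, and the split returns to an even 250 per path once the backlog clears.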

Why was MRC released as an open specification through OCP?

By releasing MRC as an open specification via the Open Compute Project, NVIDIA, Microsoft, and OpenAI signal that this protocol is for the entire industry. Open standards foster innovation, interoperability, and wider adoption. It allows other hardware vendors and data center operators to implement MRC without being locked into a single vendor. The openness also accelerates the evolution of AI networking, as the community can contribute improvements. This move aligns with NVIDIA's strategy for Spectrum-X: an open, AI-native fabric that sets the standard. MRC's journey from concept to production to open specification exemplifies the collaborative spirit needed to solve the unique challenges of gigascale AI.

How does Spectrum-X hardware support MRC?

NVIDIA Spectrum-X Ethernet hardware is purpose-built to take full advantage of protocols like MRC. Its switches and SuperNICs incorporate deep telemetry and intelligent fabric control that work in tandem with MRC's multi-path logic. This hardware-software co-design enables real-time monitoring of network conditions and rapid adaptation. The fabric control layer orchestrates traffic across thousands of links, while telemetry provides administrators with actionable insights. Without this optimized hardware, MRC's potential would be limited. Spectrum-X is meant to ensure that the protocol's theoretical benefits (high GPU utilization, congestion avoidance, and reliability) are realized in practice at massive scale.
