Unveiling the Magic: How Spotify's 2025 Wrapped Works

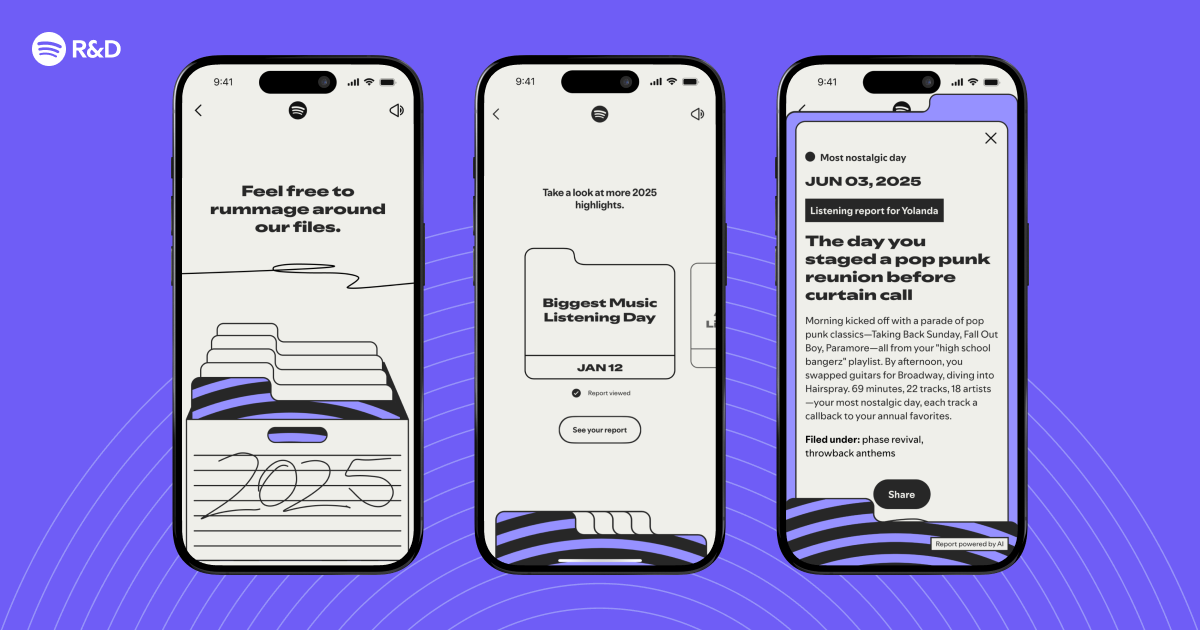

Ever wonder how Spotify transforms your year of listening into a personalized story? The 2025 Wrapped experience goes beyond simple stats: it combines data science, machine learning, and large-scale data engineering. Here’s an inside look at the tech that makes your highlights possible.

1. How does Spotify identify your most interesting listening moments?

Spotify’s algorithms analyze patterns in your listening behavior throughout the year, looking for anomalies such as a sudden change in genre, a late-night playlist discovery, or a track you replay until it becomes your anthem. Using clustering techniques and sequence modeling, the system pinpoints moments that deviate from your usual habits. These standout stories, like discovering a new artist during a road trip or revisiting an old favorite, are what make your Wrapped feel personal.
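To make “a moment that deviates from your usual habits” concrete, here is a minimal sketch. It does not use clustering or sequence models; it swaps in a simpler stand-in that scores each month by the Jensen-Shannon divergence between that month’s genre mix and the listener’s whole-year mix. The function names and the month-to-genres input format are hypothetical.

```python
import math
from collections import Counter


def distribution(genres):
    """Turn a list of genre labels into a probability distribution."""
    counts = Counter(genres)
    total = sum(counts.values())
    return {g: c / total for g, c in counts.items()}


def js_divergence(p, q):
    """Jensen-Shannon divergence in bits (bounded in [0, 1]) between two distributions."""
    keys = set(p) | set(q)
    m = {k: 0.5 * (p.get(k, 0.0) + q.get(k, 0.0)) for k in keys}

    def kl_to_m(a):
        # Zero-probability terms contribute nothing by convention.
        return sum(a[k] * math.log2(a[k] / m[k]) for k in a if a[k] > 0)

    return 0.5 * kl_to_m(p) + 0.5 * kl_to_m(q)


def most_unusual_month(plays_by_month):
    """Return (month, score) for the month whose genre mix differs most from the year."""
    all_plays = [g for plays in plays_by_month.values() for g in plays]
    baseline = distribution(all_plays)
    scores = {
        month: js_divergence(distribution(plays), baseline)
        for month, plays in plays_by_month.items()
        if plays
    }
    month = max(scores, key=scores.get)
    return month, scores[month]


plays = {
    "Jan": ["pop"] * 9 + ["rock"],
    "Feb": ["pop"] * 8 + ["rock"] * 2,
    "Jul": ["jazz"] * 7 + ["pop"] * 3,
}
print(most_unusual_month(plays)[0])  # prints: Jul
```

Jensen-Shannon divergence is a convenient choice for this kind of score because it is symmetric and bounded, which keeps scores comparable across listeners with very different volumes.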

2. What machine learning models power the personalization in Wrapped?

Multiple models work together. Collaborative filtering groups you with users who have similar tastes, while natural language processing (NLP) analyzes song lyrics and podcast content to infer mood and context. A deep learning architecture, referred to as a Temporal Attention Network, weighs how your listening changes over time. This lets the system rank moments by significance and choose which stories to highlight, such as your biggest genre shift or your most-listened-to podcast episode.
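Collaborative filtering comes in many forms; the sketch below is a textbook user-based variant, not Spotify’s production model. It represents each listener as a sparse map of artist to play count, finds the most similar listeners by cosine similarity, and scores artists the target listener has not played yet. The data and function names are hypothetical.

```python
import math


def cosine(u, v):
    """Cosine similarity between two sparse play-count vectors (dict: artist -> plays)."""
    dot = sum(u[k] * v[k] for k in u.keys() & v.keys())
    norm_u = math.sqrt(sum(x * x for x in u.values()))
    norm_v = math.sqrt(sum(x * x for x in v.values()))
    if norm_u == 0 or norm_v == 0:
        return 0.0
    return dot / (norm_u * norm_v)


def recommend(target, others, k=2):
    """Rank unplayed artists by the similarity-weighted plays of the k nearest users."""
    neighbours = sorted(
        others.values(), key=lambda plays: cosine(target, plays), reverse=True
    )[:k]
    scores = {}
    for plays in neighbours:
        sim = cosine(target, plays)
        for artist, count in plays.items():
            if artist not in target:
                scores[artist] = scores.get(artist, 0.0) + sim * count
    return sorted(scores, key=scores.get, reverse=True)


me = {"A": 10, "B": 5}
others = {
    "u1": {"A": 8, "B": 4, "C": 6},
    "u2": {"A": 9, "D": 3},
    "u3": {"X": 10, "Y": 10},
}
print(recommend(me, others))  # prints: ['C', 'D']
```

Comparing every pair of users does not scale to hundreds of millions of accounts, so large systems typically replace this brute-force search with matrix factorization or approximate nearest-neighbour indexes.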

3. How does Spotify handle the massive scale of data for Wrapped?

With over 500 million users, processing an entire year’s worth of streams requires a robust distributed computing pipeline. Spotify uses Apache Beam for batch processing and TensorFlow for model training. Data is partitioned by region and time windows, then aggregated in parallel on Google Cloud infrastructure. Caching and sharding strategies ensure that the final summary can be generated quickly, often within hours, despite petabytes of log data.
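The partition, aggregate-in-parallel, then merge shape described above can be illustrated without a cluster. The sketch below is a pure-Python stand-in, not Apache Beam code: it keys play events by (region, month), counts plays per partition on a thread pool, and merges the partial counts. The event tuple layout and function names are assumptions for illustration.

```python
from collections import Counter, defaultdict
from concurrent.futures import ThreadPoolExecutor


def partition(events):
    """Group (region, month, track_id) events into partitions keyed by (region, month)."""
    parts = defaultdict(list)
    for region, month, track in events:
        parts[(region, month)].append(track)
    return dict(parts)


def count_plays(tracks):
    """Work done independently for each partition: count plays per track."""
    return Counter(tracks)


def top_tracks(events, n=3, workers=4):
    """Partition events, count each partition in parallel, then merge the partial counts."""
    parts = partition(events)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        partials = list(pool.map(count_plays, parts.values()))
    total = Counter()
    for partial in partials:
        total.update(partial)  # merge ("reduce") step
    return total.most_common(n)


events = [
    ("EU", "2025-01", "t1"),
    ("EU", "2025-01", "t2"),
    ("US", "2025-01", "t1"),
    ("US", "2025-02", "t1"),
    ("EU", "2025-02", "t3"),
]
print(top_tracks(events, n=1))  # prints: [('t1', 3)]
```

In Beam, the same shape would usually be expressed as a keyed combine (for example `CombinePerKey`), with the runner spreading partitions across worker machines rather than threads.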

4. Can you explain how the 'audio aura' or mood analysis works in 2025 Wrapped?

The audio aura, which first appeared in Wrapped 2021, is built on acoustic analysis. Spotify extracts features such as tempo, key, danceability, energy, and valence for each track, the same kind of attributes it exposes through its Audio Features API. These are fed into a neural network that clusters songs into mood palettes such as “calm blue” or “energetic orange.” Your aura is a blend of your most-listened moods, visualized as a colored gradient. The algorithm runs over each user’s top 100 songs, creating a unique fingerprint.
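The mood clustering above is attributed to a neural network; the sketch below substitutes a much simpler nearest-centroid assignment to show the overall flow. It maps each track’s energy and valence (two of the features listed above, each on a 0 to 1 scale in Spotify’s Web API) to the closest of a few mood centroids, then blends the leading moods’ colours into one aura colour. The centroid positions, RGB values, and the “moody purple” mood are invented for illustration.

```python
import math
from collections import Counter

# Hypothetical mood centroids in (energy, valence) space, each paired with an RGB colour.
MOODS = {
    "calm blue": ((0.2, 0.4), (70, 110, 200)),
    "energetic orange": ((0.85, 0.75), (245, 140, 40)),
    "moody purple": ((0.6, 0.2), (120, 60, 160)),
}


def nearest_mood(energy, valence):
    """Assign a track to the closest mood centroid by Euclidean distance."""
    return min(MOODS, key=lambda m: math.dist((energy, valence), MOODS[m][0]))


def aura(tracks, top=2):
    """Return the leading moods with their shares, plus a share-weighted RGB blend."""
    counts = Counter(nearest_mood(e, v) for e, v in tracks)
    leading = counts.most_common(top)
    total = sum(c for _, c in leading)
    blend = tuple(
        round(sum(MOODS[m][1][i] * c for m, c in leading) / total) for i in range(3)
    )
    return [(m, c / total) for m, c in leading], blend


tracks = [(0.9, 0.8), (0.8, 0.7), (0.15, 0.45), (0.9, 0.9)]  # (energy, valence) per track
print(aura(tracks))
# prints: ([('energetic orange', 0.75), ('calm blue', 0.25)], (201, 132, 80))
```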

5. What technical challenges did engineers face building Wrapped 2025?

One major challenge was balancing data freshness against computation time. Wrapped is a static snapshot (data is cut off in late November), but users expect up-to-date insights, so engineers had to choose a cutoff that balances completeness and processing speed. Another hurdle was privacy: generating personalized stories without exposing raw data to internal teams. Spotify uses differential privacy techniques, adding calibrated noise to aggregate statistics so that individual users cannot be re-identified from them. Finally, rendering the interactive UI for millions of users at once required a highly optimized content delivery network (CDN) and lazy loading.
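In its simplest textbook form, adding noise to an aggregate for differential privacy is the Laplace mechanism. The sketch below is a generic illustration, not Spotify’s implementation: it adds Laplace noise with scale `sensitivity / epsilon` to a count, where `epsilon` is the privacy budget (smaller means more noise) and `sensitivity` is the most a single user can change the count. The parameter values are arbitrary.

```python
import random


def laplace_noise(scale, rng):
    """Draw from Laplace(0, scale) as the difference of two i.i.d. exponentials with mean `scale`."""
    return rng.expovariate(1 / scale) - rng.expovariate(1 / scale)


def private_count(true_count, epsilon, sensitivity=1.0, rng=None):
    """Release `true_count` with epsilon-differential privacy via the Laplace mechanism."""
    if rng is None:
        rng = random.Random()
    return true_count + laplace_noise(sensitivity / epsilon, rng)


rng = random.Random(7)
print(private_count(10_000, epsilon=0.5, rng=rng))  # 10000 plus Laplace noise of scale 2
```

A sensitivity of 1 only holds if each user contributes at most once to the count. For totals such as stream counts, one heavy listener could move the number by thousands, so per-user contributions have to be capped before the noise is added.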

6. How does Spotify decide which new features to include each year?

The team evaluates engagement metrics from previous years and runs A/B tests on early prototypes. For 2025, the lineup featured the “audio aura” alongside a new “listening lineage” feature that traces how your taste evolved. The team also monitors social media buzz, such as #Wrapped trends, to see what users love most. Engineering feedback on data availability and computational cost shapes the final lineup as well: features that resonate with users and are technically feasible get greenlit.
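A common way to check whether a prototype genuinely changed engagement in an A/B test is a two-proportion z-test. The sketch below is a generic statistical illustration; which test Spotify actually uses is not stated, and the share counts in the example are made up.

```python
import math


def two_proportion_ztest(hits_a, n_a, hits_b, n_b):
    """Two-sided z-test for a difference in engagement rates between variants A and B."""
    p_a, p_b = hits_a / n_a, hits_b / n_b
    pooled = (hits_a + hits_b) / (n_a + n_b)  # rate under the null hypothesis of no difference
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # equals 2 * (1 - Phi(|z|))
    return z, p_value


# Control: 1,200 of 10,000 users shared their card; prototype: 1,320 of 10,000.
z, p = two_proportion_ztest(1200, 10_000, 1320, 10_000)
print(round(z, 2), p)  # z is roughly 2.56; p falls below 0.05
```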

7. What's the future of Wrapped? Any hints for 2026?

Spotify’s patent filings hint that future editions of Wrapped could integrate real-time collaborative playlists or even AI-generated narrators that describe your year in voice. There has also been discussion of using federated learning to improve personalization without centralizing user data. Expect more cross-platform integration too, such as syncing with your fitness tracker for “workout anthems.” The core goal remains the same: tell richer, more surprising stories about your listening life.
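Federated learning is a speculative direction here, but the core idea is easy to sketch. Below is a generic federated averaging (FedAvg) loop, not anything Spotify has published: each simulated device fits a tiny linear model on its own data, and only the resulting weights and example counts are sent back and averaged. The model, learning rate, and data are illustrative assumptions.

```python
def local_update(weights, data, lr=0.05, epochs=5):
    """On-device training: gradient descent on a linear model y ~ w.x with squared error.

    The raw (x, y) pairs never leave this function; only new weights and a count do.
    """
    w = list(weights)
    for _ in range(epochs):
        grad = [0.0] * len(w)
        for x, y in data:
            err = sum(wi * xi for wi, xi in zip(w, x)) - y
            for i, xi in enumerate(x):
                grad[i] += 2 * err * xi / len(data)
        w = [wi - lr * gi for wi, gi in zip(w, grad)]
    return w, len(data)


def fed_avg(client_updates):
    """Server step: average client weights, weighted by each client's example count."""
    total = sum(n for _, n in client_updates)
    dim = len(client_updates[0][0])
    return [sum(w[i] * n for w, n in client_updates) / total for i in range(dim)]


clients = [
    [([1.0], 2.0), ([2.0], 4.0)],  # device 1: private (x, y) pairs following y = 2x
    [([3.0], 6.0)],                # device 2
]
weights = [0.0]
for _ in range(20):  # communication rounds
    weights = fed_avg([local_update(weights, data) for data in clients])
print(weights)  # converges toward [2.0]
```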

8. Where can I learn more about the engineering behind Wrapped?

Spotify Engineering publishes deep dives on its official blog, engineering.atspotify.com. Look for posts on audio feature analysis, scalable data pipelines, and model interpretability to go deeper. The original article, “Inside the Archive: The Tech Behind Your 2025 Wrapped Highlights,” is a great starting point. For open-source code, explore Spotify’s GitHub organization (for example Scio, its Scala API for Apache Beam) and TensorFlow Extended (TFX), Google’s open-source framework for production ML pipelines.
